JSON Array Output from Azure Stream Analytics

The guys at Microsoft Azure surely have done a good job of providing some of the best solutions for common tasks. These tasks seem trivial but I guess that’s not always the case when you get to implementation. You get to realize this your boss tells you to perform statistical analysis live. When I was in school, most of the statistics were done on already know data. There was nothing like moving average then. Now in the current age of technology, having a live stream of data is not only preferred but in many situations compulsory.

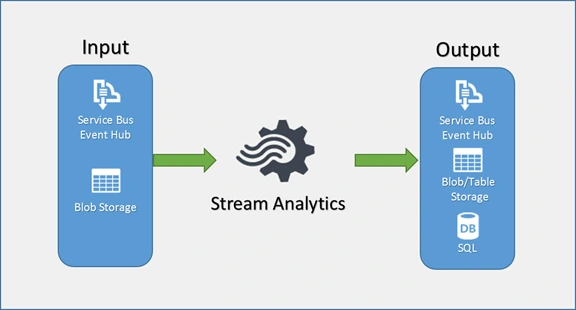

Azure Stream Analytics does a good job at this kind of streaming work when you set up your Streaming Job correctly. You can read more about this here. When you use it, you never care about the operating system or language you have to write your code in or the special format that your data is in. It supports JSON, CSV, etc.

I like to focus on JSON because of how common and resilient it is. Of course, it is not the best in saving on space but those are just a few kilobytes. Space is really cheap these days. However, the output you get from your streaming job can fail to meet your requirements. This is the case when you want JSON output and your data is aggregated every hour or more.

Let us assume you have set up your stream analytics to aggregate your input data every hour and output to Blob Storage in JSON format. The good thing is you do not need code for that at all. Yay! If you have several inputs in one hour, your output should just be a single file with all those input records. According to the JSON spec, you should specify this as an array by enclosing each JSON object within [] (two square brackets). More on that here. With that, the JSON deserializer (in whatever language you use), should understand that as a JSON array. Unfortunately, that will not work with Azure Stream Analytics as of today but it used to earlier. The resulting file will be a file with the starting square bracket and no closing square bracket. This will not work on many JSON deserializer implementations.

How it works

Why does this Stream Analytics behave like so, yet JSON has been a standard all this time? First, let me clarify, the JSON objects within are in good condition. Now on to how Stream Analytics works. When the job is started it begins listening to the inputs in the case of live data like Event Hubs, IoT Hub and those others that may be added in the future. When an event occurs, the job determines what category it will be in depending on the hour.

Say your output format is yyy/MM/DD/HH to produce different files every hour. The streaming job will create a file in blob storage (depending on where you have directed it) with the prefix as the output format. It then acquires a lease and begins to write data. If the output is JSON Array, it will begin with the first square bracket and write the JSON objects as they come from the input. If you look at the file contents, there will be no closing square bracket. According to some Microsoft response, this is by design.

After watching closely, I find that the closing square bracket is added when you move to the next hour. Then the file for the previous hour is closed and the blob lease released before a file for the current hour is created, leased. If your aggregation is not hourly but daily, the behavior will be seen at the day’s end. However, if there is no continuous flow of data into the streaming job, the file change over may not happen.

Solution

If you insist that you have to get JSON Array, then wait for the hour or day (or your chosen aggregation period) to end. This can be done by sorting all the output files by data ascending/descending but skipping the last/first respectively before reading the contents and parsing them. As a result, you shall not have the very latest data when you finish reading. This may be a disadvantage for some implementations.

The Azure team, however, recommends that you should not use a JSON Array. Indeed, from the portal, both the newer and the older portals will allow you set the output format to JSON Array and save but if you check afterward, you will find that it changed to Line Separated. The only way to get the JSON array output is to use Powershell commands and ARM (Azure Resource Manager) JSON templates to get the output setup correctly. Not too tedious but can be done.

On the other hand, you may choose to go with the Line Separated output. The unfortunate part of this approach, is you need to write some code to modify how the file contents, which are no longer in JSON array format, are read into an array of objects. This is easy to do.

Read Line Separated with C#

This was my approach, yours can be different. I used the common JSON library Newtonsoft.Json; official site here, NuGet package here.

First, extend the JsonSerializer class.

public static class JsonSerializerExtensions {

public static IEnumerable<T> DeserializeMultiline<T>(this JsonSerializer serializer, JsonReader reader)

{

var result = new List<T>();

if (!reader.SupportMultipleContent)

reader.SupportMultipleContent = true;

while (reader.Read())

{

var model = serializer.Deserialize<T>(reader);

if (model != null) result.Add(model);

}

return result;

}

}Next, I read the file content:

IEnumerable<MyObject> models;

using (var stream = new MemoryStream())

{

await blob.DownloadToStreamAsync(stream);

stream.Position = 0;

using (var reader = new JsonTextReader(new StreamReader(stream)))

{

models = serializer.DeserializeMultiline<MyObject>(reader);

}

}

return models;That’s it, we’re done. Happy coding guys 😊